Washington is Removing the Only Federal Guardrails on Healthcare AI. What Happens Next, and Who Protects Patients, is Up to You.

Remember, back in the day, when kids on bikes would yell, “Look, no hands!” only to hear their parents reply, “Look, no teeth”? Healthcare leaders deploying clinical AI increasingly sound like those parents as vendors roll out new tools and features.

Somewhere right now, a clinical decision support tool is recommending a course of treatment. Its training data? The hospital never saw it. Nor could the hospital independently verify its performance benchmarks. And the end user doesn’t control the tool’s maintenance schedule.

Under HTI-1’s “model card” requirement, AI vendors at least have to disclose those inconvenient realities. But not for much longer if HHS cements the deregulatory actions proposed in HTI-5, officially titled “Health Data, Technology, and Interoperability: ASTP/ONC Deregulatory Actions to Unleash Prosperity.”

The Guardrails Are Coming Off

The AI model card wasn’t perfect, but it was the closest thing healthcare had to a nutrition label for algorithms. Developers had to disclose exactly what was inside the black box via machine learning documents that provide context and transparency into a model’s development, intended use, training data, performance metrics, known risks, bias mitigation, and ongoing validation and maintenance processes.

That standardized transparency is now on the chopping block, along with basic risk-management obligations for predictive decision support interventions (DSIs), including those powered by AI. HHS’s stated rationale is that “no publicly available evidence” demonstrates that these requirements delivered measurable improvements in patient care. The proposal would eliminate or revise more than half of existing ONC certification criteria, cutting an estimated 1.4 million compliance hours for developers.

Major EHR and AI vendors have greeted HTI-5 as overdue housekeeping. Oracle’s Seema Verma called it “highly encouraging” that ASTP/ONC is finally reducing regulatory burden. In its public comments, Epic Systems bolstered the HHS case with internal data showing that in 2025, 46% of its customer organizations had zero users who ever accessed the source attributes in the workflow. Their collective message: model cards were largely bureaucratic theater that slowed innovation without clear upside.

Not everyone agrees. The American Medical Informatics Association (AMIA) cautioned that removing transparency criteria “could introduce unintended risks.” The Medical Group Management Association (MGMA) said that practices will now “assume inappropriate risk” and lose “the only consistent mechanism for evaluating AI tools they are purchasing.” Patient-safety advocates contend that clinicians and organizations are about to start flying blind in an environment where AI is already driving prior authorizations, ambient documentation, and predictive analytics at scale.

Public comments closed on February 27, 2026, with more than 6,400 submitted. The final rule has not been issued, but the intent seems clear. The guardrails aren’t vanishing because the black-box problem in clinical AI has been solved. Rather, HHS has simply decided to stop requiring vendors to open the box at all.

Meanwhile, the States Are Forging a Regulatory Patchwork

Turns out that healthcare AI is not just an industry buzzword; it’s catnip for state lawmakers. While federal guardrails retreat, states are rushing to fill the void, creating exactly the compliance complexity that mid-market organizations dread. In 2025 alone, 250 AI-related bills were introduced, with 33 signed into law across 21 states. Several key measures are taking effect in 2026:

- Texas now requires written disclosure to patients whenever AI is used in their diagnosis or treatment (effective January 1, 2026).

- California mandates that generative AI developers disclose high-level training data sources (AB 2013) and prohibits AI systems from impersonating licensed healthcare professionals (AB 489).

- Colorado’s AI Act imposes governance, disclosure, and risk-management requirements on high-risk AI systems (phased implementation through June 2026).

- Illinois prohibits AI from making independent decisions in therapy or psychotherapy without licensed human oversight.

These laws recognize that patients and clinicians should know when AI is shaping care and how it works. But the picture is complicated by a White House executive order signed in December 2025 that directs federal preemption of state AI laws deemed “inconsistent” with national policy. What counts as inconsistent is not defined, setting the stage for regulatory whiplash and more litigation.

For managed care organizations (MCOs) operating across state lines, the compliance environment just became significantly more uncertain. National payers with armies of data scientists will adapt. They can build internal governance layers and absorb the risk. Smaller and mid-market organizations? Not so much.

Data Veracity as the New Governance Layer

Here’s the reality: organizations that depend on vendor-supplied model cards for AI governance were always building on borrowed ground. Model cards were a floor, not a ceiling. And that floor is being pulled up.

Post-HTI-5, organizations looking to deploy AI face a clear governance vacuum. How do you evaluate competing tools during procurement when standardized disclosures disappear? How do you assess whether a prior-authorization algorithm denying claims was trained on representative data? Can you spot the kind of data-quality failures that can contribute to wrongful denials, such as the 13% of Medicare Advantage prior authorization denials that the OIG found had actually met Medicare coverage rules? How do you satisfy state transparency mandates if your vendor won’t (or can’t) disclose training methodology?

What CIOs and CTOs can do now:

- Ask the hard procurement questions. Post-HTI-5, demand evidence of upstream data governance, not just model cards that may never be read.

- Prioritize data infrastructure before AI pilots. A pristine foundation turns AI from a cost center into a reliable force multiplier.

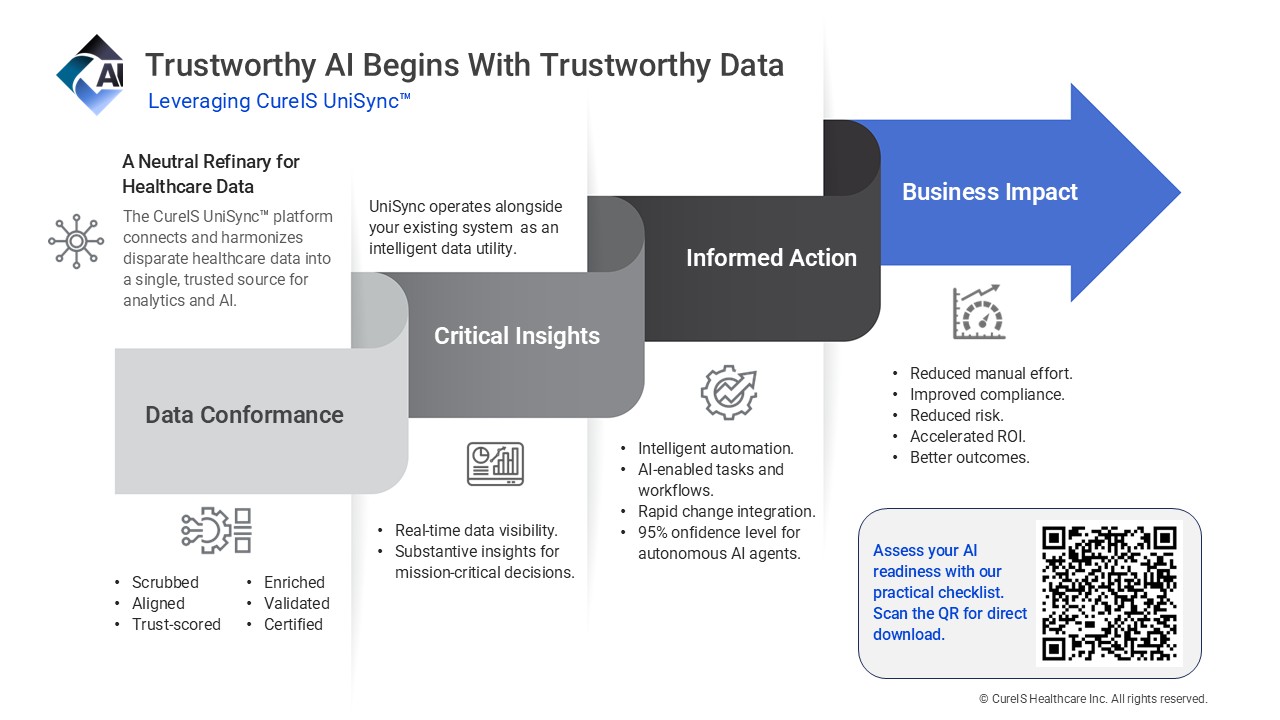

- Treat middleware as strategic insurance. Neutral data utilities like CureIS UniSync™ provide the explainability and error prevention that federal rules no longer guarantee.

The organizations that will thrive are those that no longer depend on external mandates and instead own their data layer. When clinical and administrative data is clean, conformed, reconciled, and fully auditable at the infrastructure level, you gain the power to verify AI outputs against known-good sources of truth, to detect algorithmic drift or degradation through data monitoring, and to make procurement decisions based on your own data-quality benchmarks rather than vendor promises.
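To make “detect drift through data monitoring” a little more concrete, here is a minimal Python sketch of one common approach: comparing the mix of a model’s recent outputs against a trusted baseline using the Population Stability Index (PSI). Everything in it is an illustrative assumption rather than a description of any vendor’s product or of UniSync: the function names `psi` and `drift_label`, the sample approve/deny data, and the 0.1 and 0.25 cutoffs, which are a widely used rule of thumb and not a regulatory standard.

```python
import math
from collections import Counter


def psi(baseline, current, eps=1e-6):
    """Population Stability Index between two samples of categorical values.

    baseline: values from a period you trust (hypothetical example data below)
    current:  values from the period being monitored
    eps:      floor applied to each share so a category missing from one
              sample does not cause a log(0) or division by zero
    """
    base_counts = Counter(baseline)
    curr_counts = Counter(current)
    total = 0.0
    for category in set(baseline) | set(current):
        p = max(base_counts[category] / len(baseline), eps)
        q = max(curr_counts[category] / len(current), eps)
        total += (q - p) * math.log(q / p)
    return total


def drift_label(score, moderate=0.1, significant=0.25):
    """Map a PSI score to a label using rule-of-thumb cutoffs (assumed, not mandated)."""
    if score >= significant:
        return "significant shift"
    if score >= moderate:
        return "moderate shift"
    return "stable"


# Hypothetical data: prior-authorization decisions from a reference quarter
# versus the most recent month.
baseline_decisions = ["approve"] * 80 + ["deny"] * 20
current_decisions = ["approve"] * 60 + ["deny"] * 40

score = psi(baseline_decisions, current_decisions)
print(f"PSI = {score:.3f} ({drift_label(score)})")
```

A check like this does not explain why a tool’s behavior changed. It only flags that the mix of outputs has moved away from a baseline the organization controls, which is the cue to investigate the underlying data and ask the vendor questions.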

This is what owning your data layer looks like in practice. And it’s what the UniSync platform enables.

“Mid-size organizations can utilize UniSync as a shortcut to an enterprise-level governance infrastructure without the enterprise price tag or system overhaul,” said CureIS Healthcare CEO Chris Sawotin. “UniSync operates alongside your existing systems as a neutral ‘data refinery.’ It cleans, normalizes, and enriches information before it ever reaches high-stakes AI models or adjudication engines.”

The Choice

Healthcare can wait for Washington to decide whether AI transparency still matters. Given the current trajectory, that could be a long wait.

Or it can invest in the data infrastructure that makes transparency a built-in feature rather than an external mandate. The organizations that do this now will be ready for whatever regulatory framework emerges – state, federal, or both. Those that don’t will be buying AI tools blind in an environment where nobody is required to tell them what’s inside.

The guardrails are coming off. The question is whether you’ve built your own.

Ready to build an AI-ready foundation that actually holds up post-HTI-5?

CureIS Healthcare has spent more than two decades helping managed care organizations and health systems close the data quality gap. Our UniSync™ Healthcare Data Management Platform was purpose-built as the neutral data refinery that turns fragmented healthcare data into the trustworthy foundation AI needs. Our data centers are SOC 2 Type II attested and HIPAA compliant.

Sources

- U.S. Department of Health and Human Services, Assistant Secretary for Technology Policy/Office of the National Coordinator for Health Information Technology. Health Data, Technology, and Interoperability: ASTP/ONC Deregulatory Actions to Unleash Prosperity (HTI-5) Proposed Rule. Federal Register, December 29, 2025. https://www.federalregister.gov/documents/2025/12/29/2025-23896/health-data-technology-and-interoperability-astponc-deregulatory-actions-to-unleash-prosperity (Includes HHS rationale on lack of evidence for transparency requirements improving patient care and proposal to remove or revise over 50% of ONC certification criteria.)

- American Medical Informatics Association (AMIA). Public comment letter on the HTI-5 Proposed Rule, February 2026. (Cautioning that removing AI transparency criteria could introduce unintended risks.)

- Medical Group Management Association (MGMA). Comment letter on HTI-5, February 27, 2026. (Warning that practices will assume inappropriate risk and lose the only consistent mechanism for evaluating AI tools.)

- Workgroup for Electronic Data Interchange (WEDI). Comment letter on the ASTP/ONC HTI-5 Proposed Rule, February 2026. (Opposing broader changes to DSI certification requirements.)

- Epic Systems. Public comments and supporting data on HTI-5 (2025–2026). (Citing 2025 internal data showing 46% of customer organizations had zero users accessing source attributes in clinical workflows.)

- Seema Verma (Oracle Health). Public statements on HTI-5 burden reduction, referenced in industry coverage (December 2025–March 2026). (Describing the proposal as “highly encouraging.”)

- ASTP/ONC HTI-5 docket summaries. (Confirming more than 6,400 public comments received by the February 27, 2026 deadline.)

- U.S. Department of Health and Human Services, Office of Inspector General. Some Medicare Advantage Organization Denials of Prior Authorization Requests Raise Concerns About Beneficiary Access to Medically Necessary Care. OEI-09-18-00260, April 2022. https://oig.hhs.gov/oei/reports/OEI-09-18-00260/ (Documenting the 13% rate of prior authorization denials that met Medicare coverage rules.)

- White House. Executive Order: Ensuring a National Policy Framework for Artificial Intelligence (December 11, 2025). https://www.whitehouse.gov/presidential-actions/2025/12/eliminating-state-law-obstruction-of-national-artificial-intelligence-policy/ (Directing federal preemption considerations for state AI laws deemed inconsistent with national policy.)

- Key 2025–2026 state AI healthcare laws:

  - Texas Responsible Artificial Intelligence Governance Act (TRAIGA / HB 149), effective January 1, 2026 (written patient disclosure for AI use in care).

  - California AB 489 (prohibiting AI impersonation of licensed healthcare professionals) and related transparency provisions.

  - Colorado AI Act (SB 24-205), phased implementation through June 2026 (governance and disclosure for high-risk AI).

  - Illinois laws prohibiting independent AI decisions in therapy/psychotherapy.